DPO (Direct Preference Optimization) LoRA for XL and 1.5 - OpenRail++ image by halilozbasak

Resources used

DPO (Direct Preference Optimization) LoRA for XL and 1.5 - OpenRail++

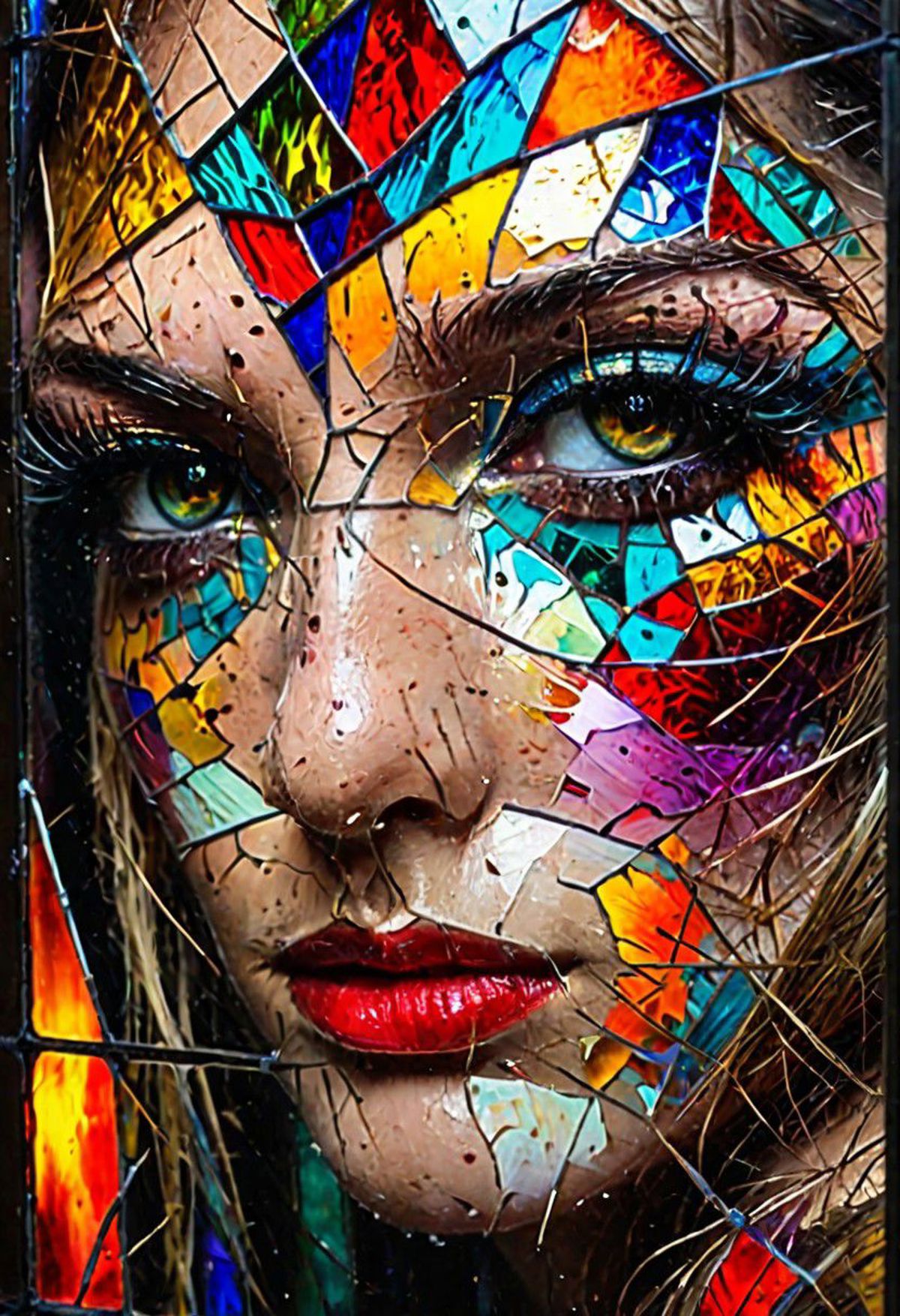

RAW photo

beautiful eyes

macro shot

masterpiece

peeking from behind stained glass

colorful details

award winning

high detailed

8k

natural lighting

analog film

detailed skin

amazing composition

intricate details

subsurface scattering

vellus hairs

amazing textures

filmic

chiaroscuro

soft light

realisticvision-negative-embedding

Image information

Size: 832 x 1216

AI Model: DPO (Direct Preference Optimization) LoRA for XL and 1.5 - OpenRail++

Created date: January 20, 2024, 3:41 AM

Picture ID: 65b95ebbf72540489123c6d1